Assignment | City As Site Research | Week 10

Tuesday, April 9th 2019, 10:11:56 pm

Background

For the past few weeks, I’ve been reading a variety of articles and books on the application of augmented reality and other unconventional forms of human-computer interaction. My focus has broadly been on using these technologies to “annotate” public spaces for people who are impaired or otherwise unable to access information in the same way as others. From this broad concept, I decided to focus my research on a single public space that I spend a good chunk of each day in: the NYC subway.

The Status Quo

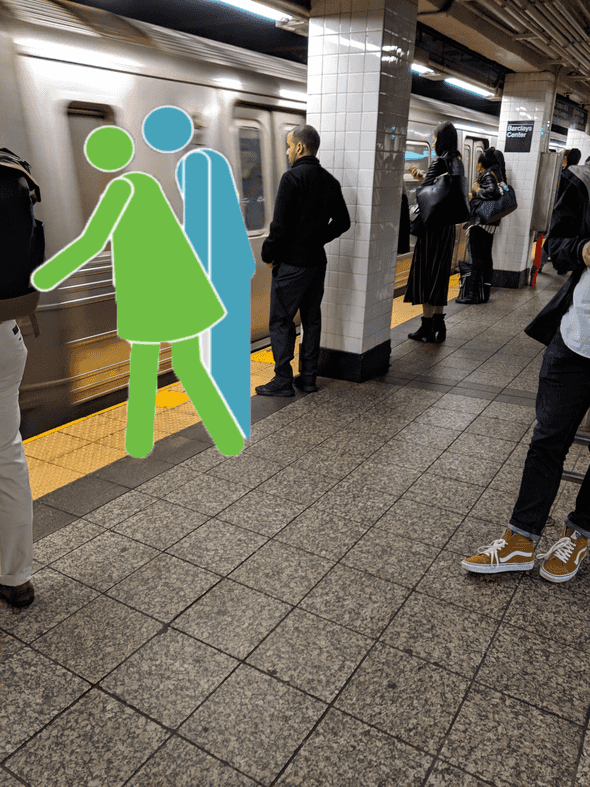

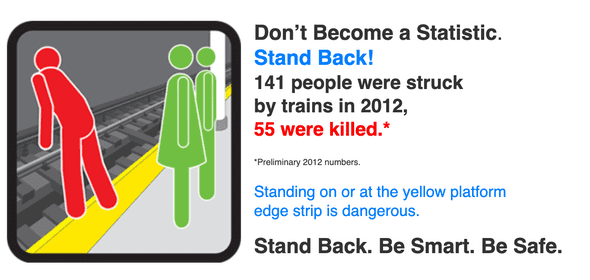

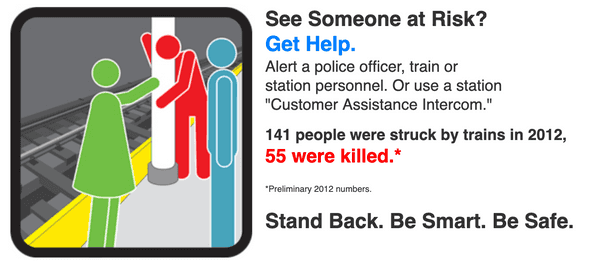

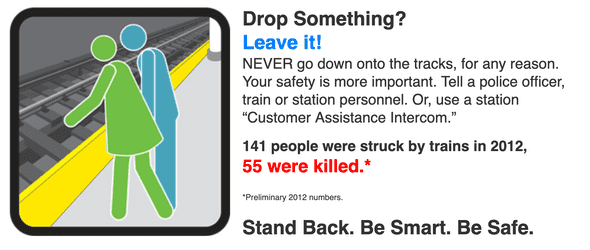

In its present state, the subway already has a large number of “low tech” annotations. These take the form of posters or announcements played through loudspeakers. The MTA does a great job of translating posters into multiple languages. However, translation alone doesn’t mean that non-literate speakers of other languages will have access to the message. Thankfully, the MTA’s designers have done a great job of illustrating most of their ideas:

Fig. 1a (via MTA)

Fig. 1b (via MTA)

Fig. 1c (via MTA)

The posters in Fig. 1 use vivid colors and carefully written copy to draw the attention of subway straphangers. In some cases, they are also translated into other languages to make them more accessible, as in Fig. 2.

Fig 2 (via MTA)

However, there are limits to how accessible these posters are.

- They only target a handful of languages, all of them relatively mainstream.

- They assume literacy in the target reader.

- They rely on cultural cues such as red/green colors meaning stop/go.

- They can be difficult to see for those with certain forms of colorblindness.

These four problems can be addressed through technology.

Overlay Images

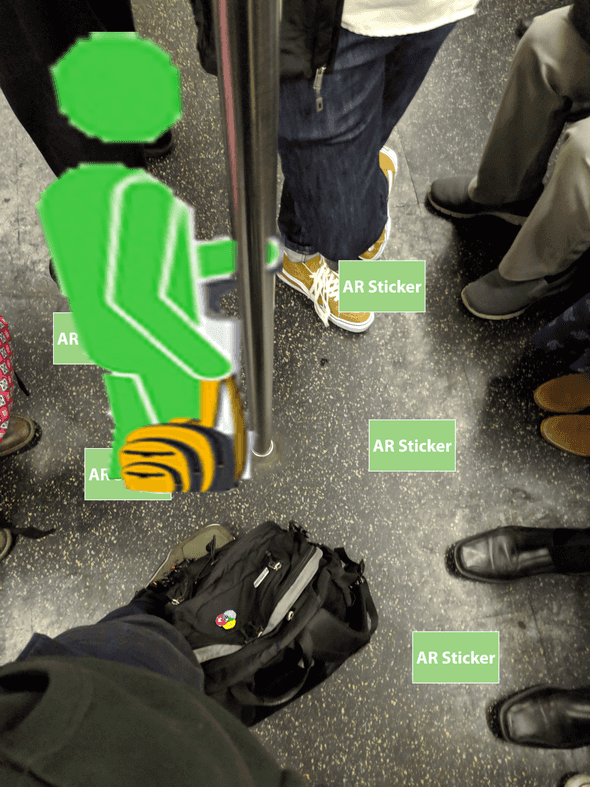

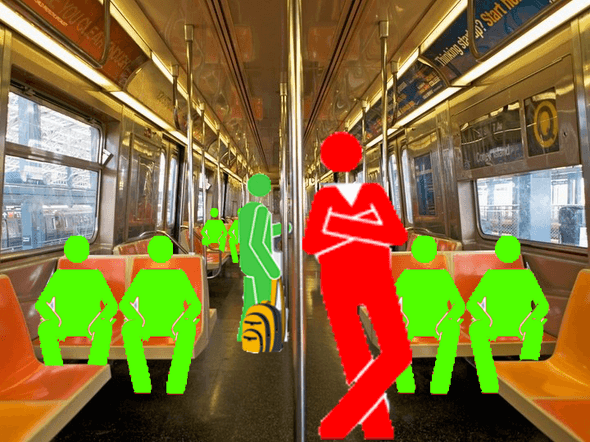

The concepts from these signs can be translated into digital annotations that are displayed on screens such as mobile phones using augmented reality technologies. This technique, borrowed from educational applications, is called adding Overlay Images to the real world:

Fig 3b (photo via Condé Nast Traveler)

The goal here is to deliver the same informational messages that posters and announcements provide, but in a more accessible form, directly on straphangers’ own screens or devices. The overlays in Fig 3 can be localized into a rider’s own language or recolored for the visually impaired. Overlay Images are also easy to update and animate, so they can be made more playful for general audiences or tailored to audiences with specific accessibility needs.
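As a rough sketch of what per-rider localization and recoloring could look like, here is a small Python example. Everything in it is an assumption for illustration: the `Overlay` class, the `render` function, the palette names and hex values, and the sample messages are hypothetical and are not MTA copy or part of any AR library.

```python
from dataclasses import dataclass

# Hypothetical palettes. The default leans on the red/green convention;
# the alternate swaps in an assumed blue/orange pair that does not
# depend on telling red from green.
PALETTES = {
    "default": {"go": "#2e8b57", "stop": "#d62728"},
    "colorblind_safe": {"go": "#0072b2", "stop": "#e69f00"},
}


@dataclass
class Overlay:
    """One annotation. `messages` maps a language code to display text."""
    meaning: str   # "go" or "stop"
    messages: dict


def render(overlay, language, colorblind_safe=False, fallback="en"):
    """Pick the text and color to draw for one rider's preferences."""
    # Fall back to a default language when no translation exists yet.
    text = overlay.messages.get(language, overlay.messages[fallback])
    palette = PALETTES["colorblind_safe" if colorblind_safe else "default"]
    return {"text": text, "color": palette[overlay.meaning]}


# Illustrative placeholder messages, not actual signage text.
platform_edge = Overlay(
    meaning="stop",
    messages={
        "en": "Stand back from the platform edge",
        "es": "Manténgase alejado del borde del andén",
    },
)
```

Calling `render(platform_edge, "es", colorblind_safe=True)` would return the Spanish text with the alternate palette’s “stop” color. Adding a language or a palette means adding a dictionary entry rather than printing a new poster.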

Implementation

These annotations can be implemented in a number of ways by combining the newer augmented reality APIs available on most mobile devices. The first approach would be to use trigger images placed in the environment ahead of time, which simplifies and stabilizes the placement of digital images in the app viewport, as in Fig 4 below.

The AR Stickers in Fig 4 interact with augmented reality APIs by marking the places in the environment where digital images and animations can be anchored.

(via Google Education)
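The sticker-to-overlay lookup described above can be sketched in a few lines of Python. This is only a sketch under stated assumptions: the detection step is assumed to come from whatever image tracking the AR framework provides, and the `Detection` class, the sticker IDs, and the overlay asset names are all hypothetical.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Detection:
    """What an AR image tracker is assumed to report for one sticker."""
    sticker_id: str
    x: float  # horizontal viewport position, 0.0 to 1.0
    y: float  # vertical viewport position, 0.0 to 1.0


# Hypothetical registry: the sticker IDs and asset names are made up.
OVERLAYS_BY_STICKER = {
    "platform-edge": "stand_back_animation",
    "exit-stairs": "exit_arrow",
}


def place_overlays(detections):
    """Pair each recognized sticker with its overlay and screen position."""
    placements = []
    for detection in detections:
        overlay = OVERLAYS_BY_STICKER.get(detection.sticker_id)
        if overlay is None:
            continue  # unknown sticker: draw nothing for it
        placements.append((overlay, detection.x, detection.y))
    return placements
```

Because the stickers only carry an ID, the same printed sticker could map to different overlays in different stations by swapping the registry.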

This is how many current education applications work, but the process could be adapted for a broader definition of education. Education as a concept is usually thought of in terms of schools and academic topics such as math, language or the sciences. However, on a micro scale, each of these AR experiences in public spaces can be thought of as a micro-education opportunity, one that is more modern and accessible than strictly print-based solutions.

Project Prototype Concept

Based on the research above, my goals and deliverables would be as follows:

- Build a prototype of an application that provides these “guides” using augmented reality for a single space such as the subway.

- Create a series of context-agnostic sticker designs to allow the image triggers to scale out and function in nearly any environment around the city. (Though I’d still preserve a consistency across them visually.)

- Deploy stickers in a single space (such as a subway platform) and record a demonstration of how the application could provide guides.

Written by Omar Delarosa who lives in Brooklyn and builds things using computers.